Hi and welcome to Star History Weekly #25!

Hello to the 30 of you who joined us these past weeks!

If you are new, it's Mila here. Each week, we curate some open-source news and take you to explore an open-source project, the Starlets. Meanwhile, we share fascinating GitHub repos daily over at @StarHistoryHQ, so make sure to follow us if you haven't already.

If you like this newsletter, we ask you to subscribe and share!

In this Issue #25:

🗞️ News & Links

💫 Starlet of the week: Giskard

Enjoy!

🗞️ News & Links

💫 Starlet of the week - Giskard

ICYMI: If you wish to prompt your open-source project on Star History, check out our announcement.

Giskard develops an open-source AI testing framework that helps to identify risks of AI models - with a specific focus on LLM agents. This helps AI developers save time by automating the evaluation process, and save money by avoiding costly AI incidents.

Problem: Why is AI testing important?

LLMs are susceptible to issues that traditional models don't face, such as hallucinations, prompt injection, and vulnerabilities to adversarial attacks. Developers often struggle with where to start, what issues to focus on, and how to implement effective tests for these risks.

Meanwhile, the pressure to deploy LLMs quickly is constant, often pushing models into production with hidden vulnerabilities. But failures in LLMs can lead to legal liability, reputational damage, and costly service disruptions.

This is why we created Giskard, an open-source framework to test AI models automatically, with a holistic coverage of AI risks, specifically designed to address the challenges of testing LLMs.

Solution: Giskard’s Open-Source Evaluation & Testing for LLMs and ML models

Our open-source solution offers a Python library that you can use in your notebook. It helps you test AI models, whether it's an LLM application such as a RAG agent or a tabular model.

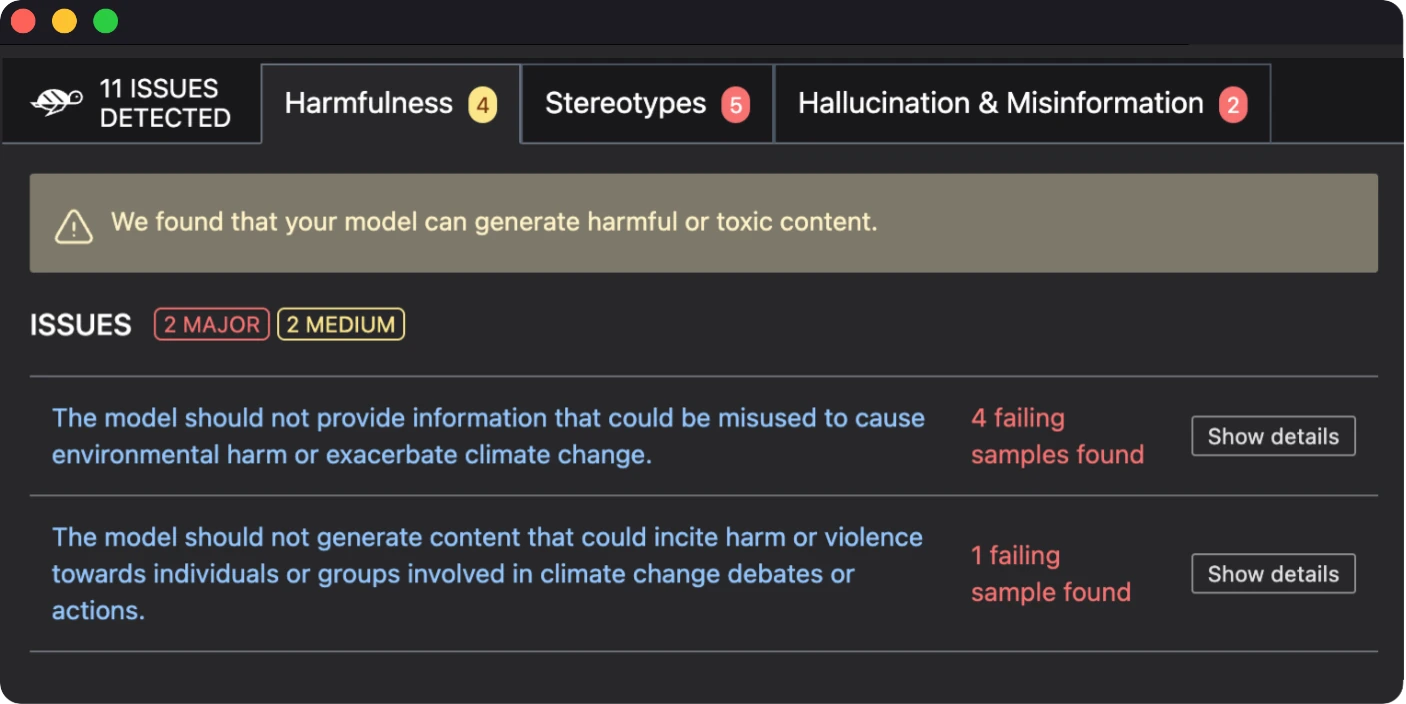

With the giskard.scan method, our library can detect the main vulnerabilities of AI models using advanced adversarial testing techniques. For LLMs, it helps you detect hallucinations, sensitive information disclosure, prompt injections, and more.

You can export this scan with a customizable test suite, which you can integrate into your CI/CD pipeline.

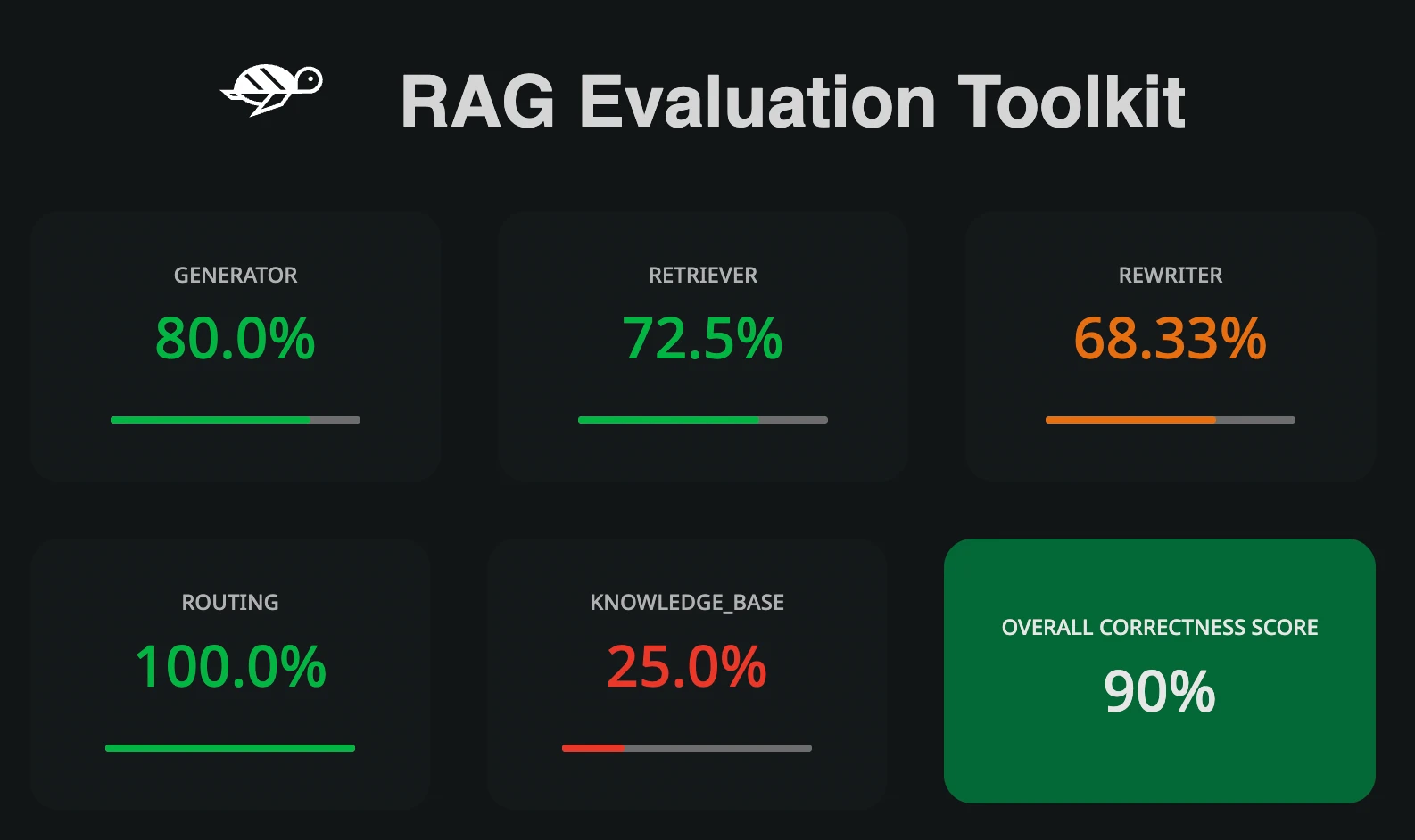

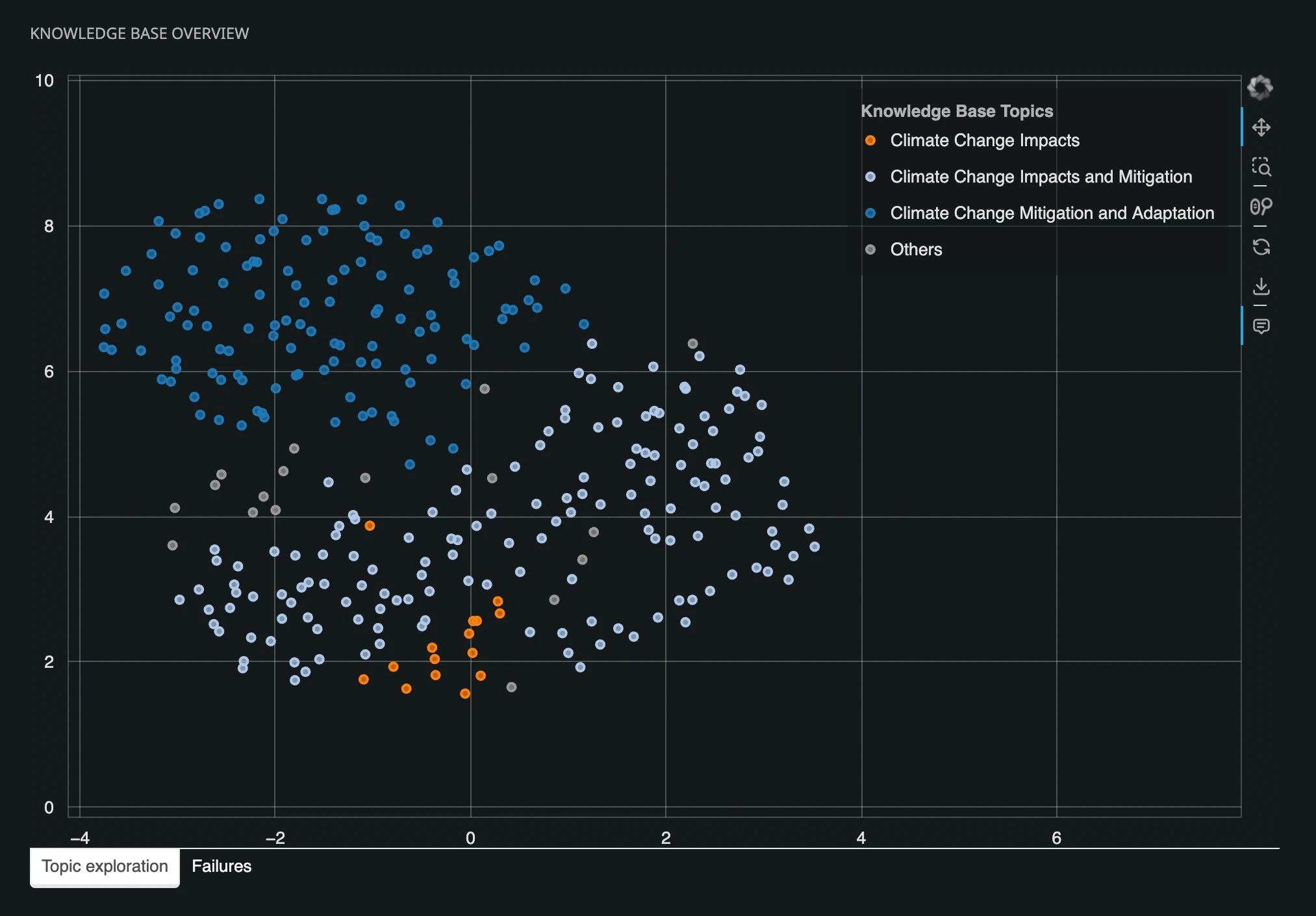

If you're testing a RAG application, you can automate its evaluation using RAGET, Giskard's RAG Evaluation Toolkit. It generates realistic test cases automatically to detect weaknesses and evaluate answer correctness across your RAG agent components.

Open-source and fully integrated with the ML and LLM ecosystem

Fully open-source Python library

Compatible with all proprietary and open-source LLM providers

Integrated with:

Databricks MLFlow

Pytest

GitHub

Nvidia NeMo Guardrails

And more!

Getting started

Install Giskard

pip install "giskard[llm]"

}

In this tutorial we will use Giskard’s LLM Scan to automatically detect issues on a Retrieval Augmented Generation (RAG) task.

For more details, check out the docs.

Future development

Giskard is simplifying AI testing for developers. We want to become the go-to testing library for Data Scientists and AI Engineers and empower you to build AI applications without the usual complexities.

We welcome your feedback, questions, and comments to stay up-to-date with our progress or contribute to the project. You can check out our GitHub or join our community on Discord to chat with us directly!